Generative AI has dramatically accelerated software development. Ideas that once took weeks to turn into working code can now be prototyped in hours. AI tools generate code, suggest tests, analyze logs, and help engineers iterate faster than ever. According to McKinsey’s research on developer productivity, AI-assisted teams can complete coding tasks significantly faster, with some organizations reporting development velocity improvements of 30 to 40% in AI-supported workflows.

But something interesting is happening in many organizations: even as coding accelerates, releases still stall. Teams wait not for code to be written, but for confidence that it is safe to ship. In many cases, the blocker is not the tests themselves. It is the data behind them. In the AI era, test data has quietly become the new critical path to quality.

Consider a typical enterprise team using AI coding assistants. Development velocity improves immediately. Features move from idea to code faster than ever. But releases still slip because QA teams must wait days for masked datasets or environment refreshes. The development bottleneck is gone, but the testing bottleneck remains.

The Bottleneck is Shifting in the SDLC

AI now supports multiple stages of the software lifecycle, reducing work that once took days to mere hours. Yet validation still takes time. Teams can generate tests quickly, but they often wait on environments, data provisioning, and compliance approvals before those tests can run.

As development accelerates, constraints move downstream toward validation. Inside QA, test data and test environments are where projects most often slow down.

Why Test Data is Uniquely Hard

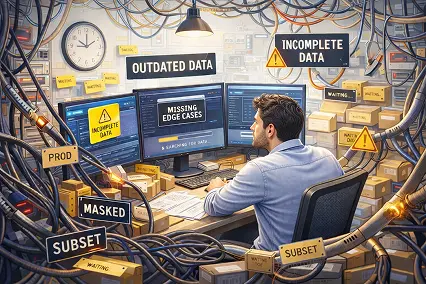

Accessing usable test data is rarely simple. Industry research consistently indicates that testers spend as much as 30 to 40% of their time searching for or preparing data rather than executing tests. Manual provisioning of test datasets can take days or weeks. That cadence does not match modern CI/CD pipelines or AI-assisted development.

When teams cannot access realistic data, test coverage suffers. Edge cases go untested, business workflows behave differently than they do in production, and defects slip through into live environments. These inconsistencies make tests unreliable and environments unpredictable. Furthermore, if automation frameworks and AI-driven testing tools rely on incomplete data, the automation itself becomes unreliable.

The AI Twist: More Code, More Testing Pressure

Agentic AI will generate more code changes and more test cases than any previous tooling. This increases the validation volume required in every build.

If teams run AI-generated tests on unrealistic datasets, the results can be highly misleading. A build may pass in the lab while still hiding defects that appear in production. “Green builds” do not always mean safe releases. Without production-like data, testing becomes a simulation detached from reality.
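A minimal sketch of how a green build can hide a defect. The helper `split_full_name` and both fixture lists are hypothetical, invented here for illustration. The tidy lab fixtures never trigger the bug, while a handful of production-like names do.

```python
def split_full_name(full_name: str) -> tuple[str, str]:
    """Naive helper that assumes every name is exactly 'First Last'."""
    first, last = full_name.split(" ")
    return first, last


# Tidy lab fixtures: every name is exactly two words.
LAB_NAMES = ["Ana Silva", "John Smith"]

# Hypothetical production-like fixtures: multi-part names, single names,
# and stray whitespace, all of which real user data tends to contain.
PROD_LIKE_NAMES = ["Ana Silva", "Mary Ann O'Neil", "Cher", "  John  Smith "]


def failures(names: list[str]) -> list[str]:
    """Return the names the helper cannot handle."""
    failed = []
    for name in names:
        try:
            split_full_name(name)
        except ValueError:
            failed.append(name)
    return failed


lab_failures = failures(LAB_NAMES)
prod_failures = failures(PROD_LIKE_NAMES)

print(f"lab data: {len(lab_failures)} failures")
print(f"production-like data: {len(prod_failures)} failures")
```

The code under test is identical in both runs. Only the data changes, and only the production-like data exposes the defect.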

Why Traditional Test Data Management Breaks Under AI Velocity

| Feature | Traditional Test Data Management | Modern Test Data Management (AI Era) |
| --- | --- | --- |
| Provisioning Model | Centralized teams, ticket-based requests | Self-service, on-demand generation |
| Data Source | Full database copies (heavy, slow) | Multi-source data automatically masked, blended, and pushed into QA/UAT environments |
| Speed | Days, weeks, or months | Minutes (self-service UI or integrated into CI/CD pipelines) |
| Architecture Fit | Monolithic legacy systems | Spans legacy, SaaS, and microservices |
| Compliance / PII | Manual masking that differs by database, inconsistent enforcement | Automated, centralized PII masking and synthetic data, built-in by design |

What Good Test Data Looks Like Today

To support modern testing, data must meet three strict criteria:

- Realistic: Datasets must reflect production behaviors, edge cases, and real business workflows.

- Compliant: Personally identifiable information (PII) must be protected. Using synthetic test data (realistic but fictitious datasets generated to mirror production patterns) and advanced masking techniques helps preserve useful data characteristics without exposing real user details.

- Consistent: Enterprise applications span multiple systems. Test data must reflect accurate relationships across services, platforms, and applications.

Platforms like K2view approach this by organizing enterprise data around business entities such as customers, policies, or orders using patented Micro-Database technology. This allows teams to provision complete, consistent datasets that reflect real user journeys across multiple systems while maintaining referential integrity. Instead of stitching together multiple tools, teams combine data masking, cloning, and synthetic generation within one platform.
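To make the "compliant" and "consistent" criteria concrete, here is a generic sketch of deterministic masking. It is not any vendor's implementation. The key, table layouts, and function names are assumptions made up for this example. Because the same input always maps to the same masked value, a customer ID masked in one system still matches the same ID masked in another.

```python
import hashlib
import hmac

# Assumption: a demo key. A real setup would load this from a secrets manager.
MASKING_KEY = b"demo-only-key"


def mask_value(value: str, prefix: str) -> str:
    """Deterministically replace a value with a keyed-hash token."""
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}_{digest[:12]}"


# Hypothetical rows from two separate systems that share customer_id.
crm_customers = [
    {"customer_id": "C-1001", "email": "ana@example.com"},
    {"customer_id": "C-1002", "email": "john@example.com"},
]
billing_invoices = [
    {"invoice_id": "INV-1", "customer_id": "C-1001", "amount": 120.0},
    {"invoice_id": "INV-2", "customer_id": "C-1002", "amount": 75.5},
    {"invoice_id": "INV-3", "customer_id": "C-1001", "amount": 19.9},
]

# Mask each system independently, using the same function for the shared key.
masked_customers = [
    {
        "customer_id": mask_value(row["customer_id"], "cust"),
        "email": mask_value(row["email"], "user") + "@example.test",
    }
    for row in crm_customers
]
masked_invoices = [
    {**row, "customer_id": mask_value(row["customer_id"], "cust")}
    for row in billing_invoices
]

# Referential integrity check: every invoice must still point at a customer.
known_ids = {row["customer_id"] for row in masked_customers}
orphans = [inv for inv in masked_invoices if inv["customer_id"] not in known_ids]

print(f"orphaned invoices after masking: {len(orphans)}")
```

Random per-table masking would protect the PII equally well but would break the join between the two systems, which is why determinism matters for cross-system test data.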

Don’t Let Test Data Become the New Bottleneck

The bottleneck has moved, but it hasn’t disappeared. Teams that treat test data as infrastructure, not an afterthought, will be the ones that actually realize the promise of AI-accelerated development. The rest will keep explaining why fast code still means slow releases.

Author

Amitai Richman, Director of Product Marketing, K2view Test Data Management

Amitai Richman is Director of Product Marketing at K2view, where he leads GTM strategy for enterprise AI and test data management solutions. He specializes in translating complex data and agentic AI capabilities into clear business value for enterprise buyers, with a particular focus on TDM, synthetic data generation, and AI-ready data infrastructure. Amitai has presented on AI in software testing at industry forums across Europe and the US, and is a recognized voice in the product marketing and QA communities.