A leader’s guide to building domain-aware evaluation disciplines that turn experimental AI pilots into production-grade, auditable enterprise systems.

The AI Agent Production Gap

AI agents are moving rapidly into software engineering, testing, DevOps, support, and back-office workflows. Many organisations have running pilots; far fewer trust those agents enough to put them into production. Gartner predicts that more than 40% of agentic AI projects will be cancelled by the end of 2027, citing cost, unclear business value, and inadequate risk controls.

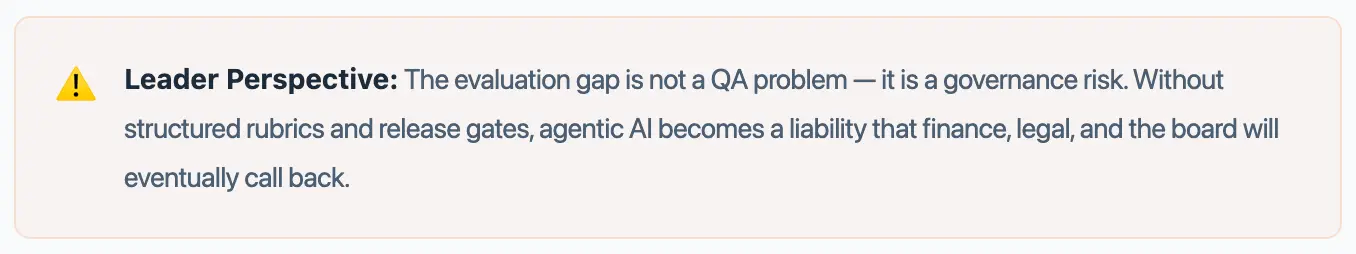

The blocker is rarely the model. It is the absence of an evaluation discipline that can answer a harder question: can this agent complete the right task, in the right context, with the right controls, consistently?

Why AI Agents Need a Different Testing Strategy

Traditional software testing assumes a known input, a fixed expected output, and repeatable behaviour. AI agents do not work that way. Outputs vary. Retrieval can return different chunks. Tool calls may take different paths. The reasoning trace shifts from one run to the next.
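One way to make this concrete is to replace a single exact-match assertion with a pass rate over repeated runs, scored by a property check rather than a fixed string. The sketch below is illustrative only: `pass_rate`, `make_stub_agent`, and `refund_confirmed` are hypothetical names, and the stub agent merely cycles through canned phrasings to stand in for a real, non-deterministic agent.

```python
import itertools
from typing import Callable


def pass_rate(agent: Callable[[str], str],
              prompt: str,
              check: Callable[[str], bool],
              runs: int = 20) -> float:
    """Call the agent `runs` times and return the fraction of outputs that pass `check`."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passed = sum(1 for _ in range(runs) if check(agent(prompt)))
    return passed / runs


def make_stub_agent() -> Callable[[str], str]:
    """Hypothetical stand-in for an agent whose wording varies from run to run."""
    variants = itertools.cycle([
        "Refund approved: order 1042.",
        "Order 1042 is eligible; refund approved.",
        "I could not find that order.",
        "Approved a refund for order 1042.",
    ])

    def agent(prompt: str) -> str:
        return next(variants)

    return agent


def refund_confirmed(output: str) -> bool:
    """Property check: the answer must mention a refund and the right order number."""
    text = output.lower()
    return "refund" in text and "1042" in text


exact = "Refund approved: order 1042."
strict = pass_rate(make_stub_agent(), "Refund order 1042", lambda out: out == exact, runs=8)
by_property = pass_rate(make_stub_agent(), "Refund order 1042", refund_confirmed, runs=8)
print(f"exact match: {strict:.2f}, property check: {by_property:.2f}")
```

With this stub, the exact-match check rejects correct answers that are simply worded differently, while the property check only fails the run where the agent could not find the order. A real evaluation would swap the stub for the actual agent call and choose `runs` according to how much variance the team can tolerate.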

Evaluating an agent requires checking whether it understood the user’s intent, retrieved the right context, called the right tool, avoided hallucination, followed enterprise policy, and escalated when its confidence was low. No single generic metric covers all of this.

Existing Evaluation Frameworks Are Necessary, Not Sufficient

A strong ecosystem of evaluation tools already exists. DeepEval, Ragas, Promptfoo, LangSmith, Braintrust, TruLens, Phoenix, and OpenAI Evals each give teams real leverage on prompts, RAG pipelines, model outputs, hallucination, retrieval quality, tool calls, traces, and regression behaviour. They are essential building blocks.

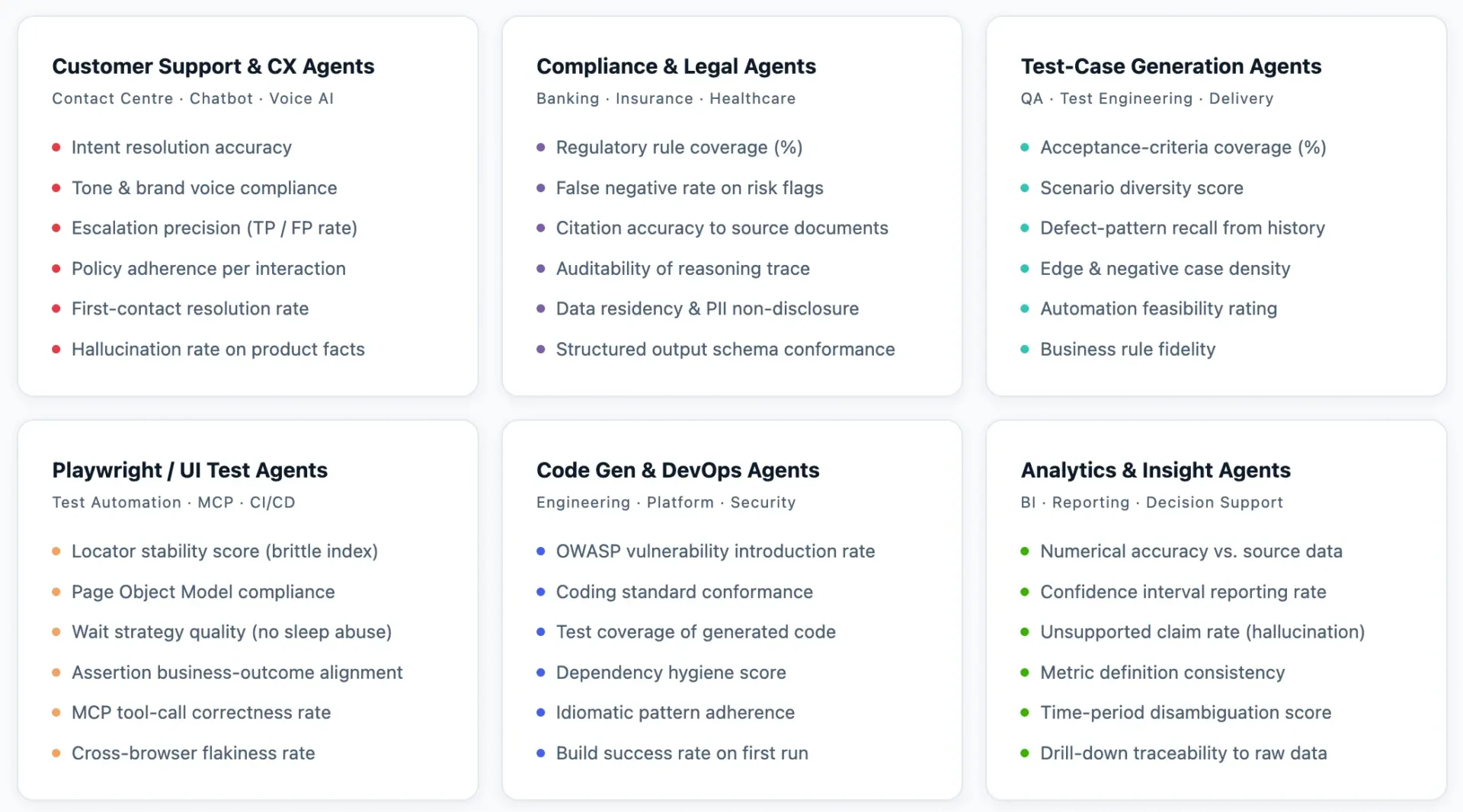

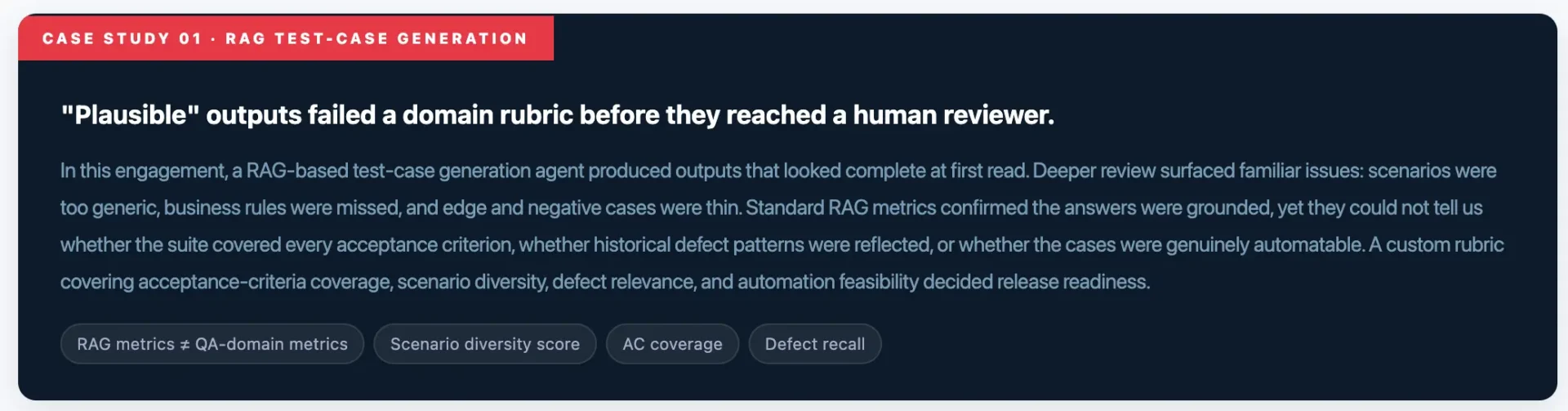

But a customer-support agent, a banking-compliance agent, a test-case generation agent, and a Playwright automation agent may all share an LLM core while having entirely different definitions of “good.” Generic accuracy and faithfulness scores cannot decide whether a generated test suite is release-ready. The evaluation tool provides the engine; the organisation must define the quality model.

The Custom Evaluation Blueprint

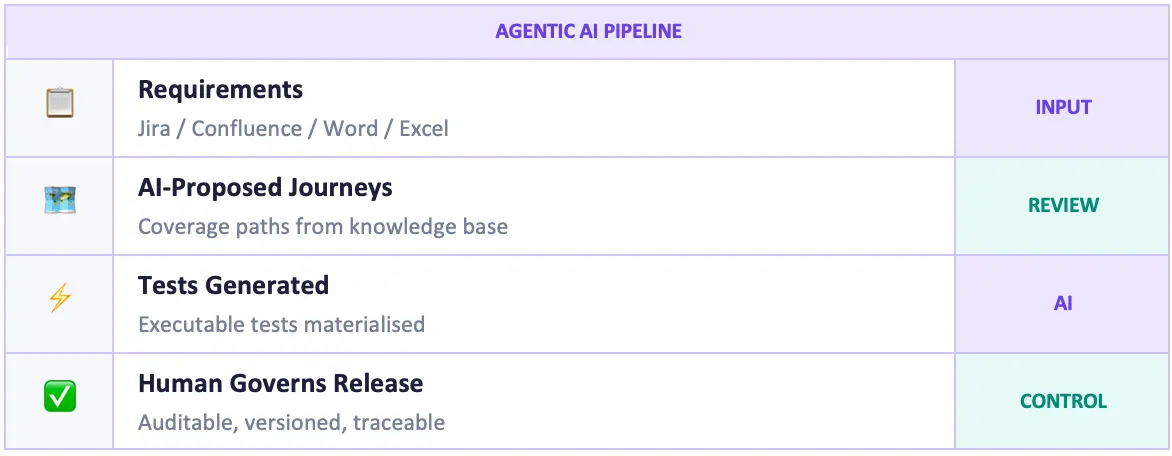

A practical custom evaluation framework can be built in seven steps:

- Define the agent’s mission. Write down what the agent must do, what it must never do, and how much autonomy is allowed before a human is required. This becomes the evaluation contract.

- Build task-level evaluation datasets. Cover normal flows, edge cases, negative scenarios, ambiguous prompts, high-risk domain cases, and historical production issues.

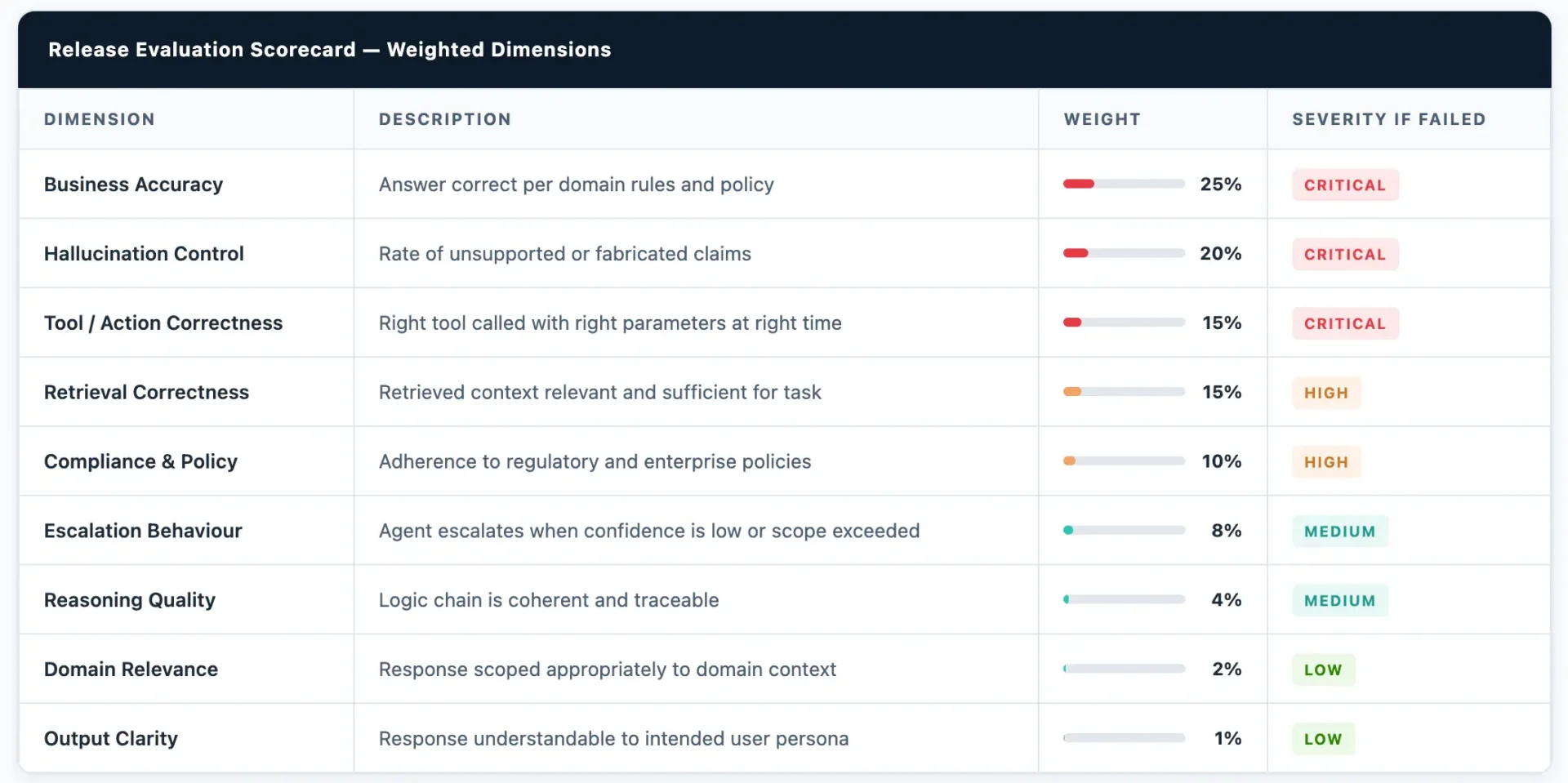

- Create domain-specific rubrics. Score domain relevance, business accuracy, retrieval correctness, reasoning, tool correctness, hallucination control, compliance, clarity, and escalation behaviour.

- Apply weighted scorecards. A formatting slip is low severity; a wrong business recommendation is critical; a wrong tool call may block release. Weight accordingly.

- Combine automated evaluation with human calibration. Automated evaluators give scale; expert reviewers calibrate the rubric over time to account for edge cases no automated scorer anticipated.

- Run regression evaluation continuously. Re-score whenever the model, prompt, RAG corpus, tool definition, workflow, or enterprise policy changes.

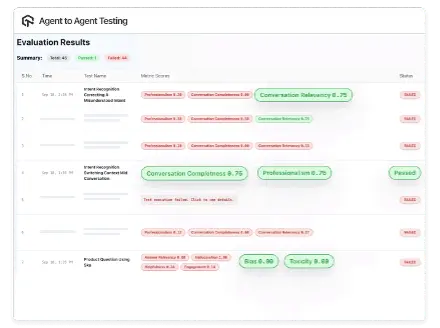

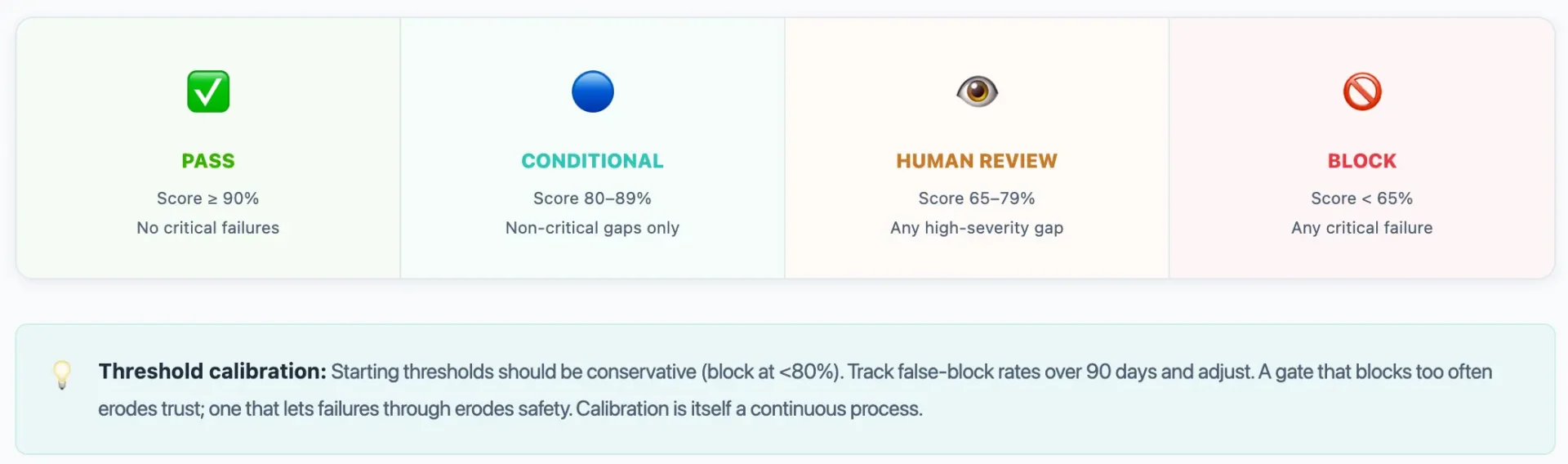

- Convert scores into release gates. Pass · Conditional Pass · Human Review Required · Block, with each gate tied to a clear business risk threshold.
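The first, second, and sixth steps lend themselves to a small data model: a written contract, a dataset whose cases are tagged by category, and a fingerprint of everything whose change should trigger a re-score. The following is a minimal sketch under stated assumptions; every class, field, and function name here is hypothetical, as are the category labels and version strings.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Iterable, Optional, Set, Tuple

# Category labels are assumptions that mirror the dataset coverage listed in the steps.
REQUIRED_CATEGORIES = frozenset({
    "normal", "edge", "negative", "ambiguous", "high_risk", "production_issue",
})


@dataclass(frozen=True)
class EvalContract:
    """The evaluation contract: mission, hard prohibitions, and autonomy limit."""
    mission: str
    must_never: Tuple[str, ...]
    max_autonomy: str  # hypothetical values: "suggest_only", "act_with_approval"


@dataclass(frozen=True)
class EvalCase:
    case_id: str
    category: str
    prompt: str
    expected_behaviour: str


def missing_categories(cases: Iterable[EvalCase]) -> Set[str]:
    """Return the required categories that have no case in the dataset yet."""
    return set(REQUIRED_CATEGORIES) - {case.category for case in cases}


def config_fingerprint(config: dict) -> str:
    """Stable hash of the agent configuration; key order does not affect the result."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_regression_run(config: dict, last_scored: Optional[str]) -> bool:
    """True when the configuration differs from the one that was last scored."""
    return config_fingerprint(config) != last_scored


# Illustrative configuration; the keys follow the change triggers named in the steps.
agent_config = {
    "model": "example-model-v1",
    "prompt_version": "v7",
    "rag_corpus_version": "2025-01",
    "tool_definitions": ["create_ticket", "lookup_order"],
    "workflow_version": "v3",
    "policy_version": "v12",
}
last_scored = config_fingerprint(agent_config)
changed = {**agent_config, "prompt_version": "v8"}
print(needs_regression_run(agent_config, last_scored), needs_regression_run(changed, last_scored))
```

Storing the fingerprint alongside each scored run gives a cheap, auditable answer to whether the scores on file still describe the agent that is about to ship.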

Custom Metrics Based on Business Context

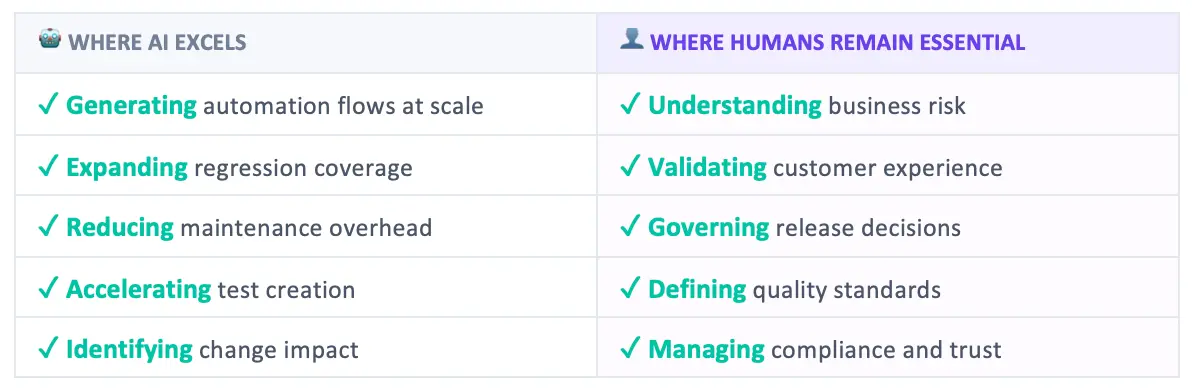

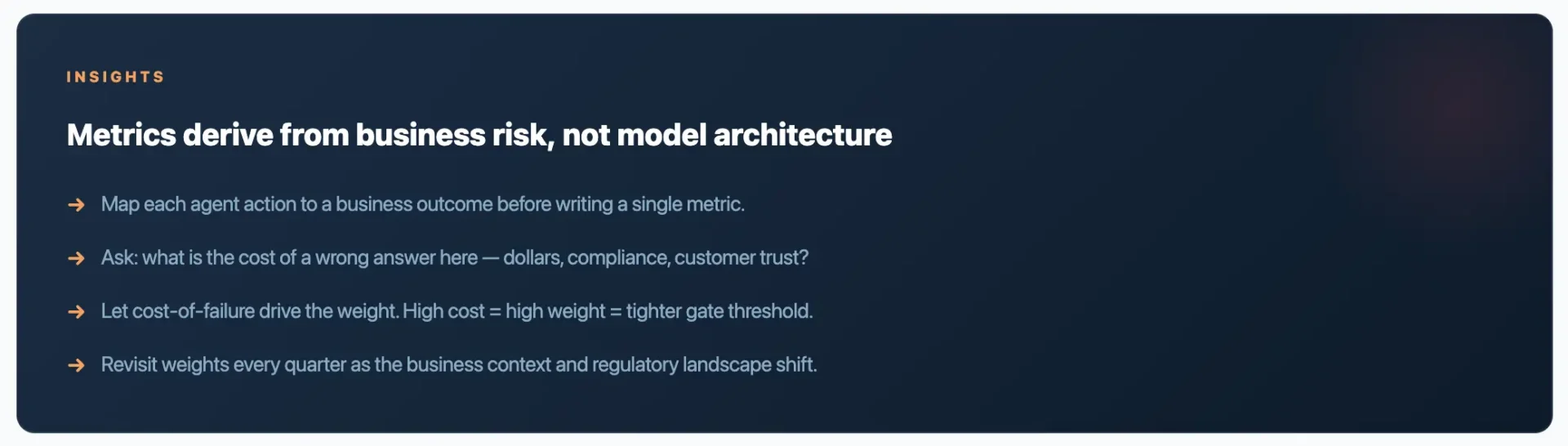

Generic LLM benchmarks measure model capability in isolation. Enterprise AI agents operate in a business context — with specific user personas, data governance requirements, integration constraints, and financial consequences of failure. The metrics must reflect that context. Below is a framework for selecting and weighting evaluation dimensions by deployment domain.

Metric Clusters by Enterprise Domain

Each domain cluster below contains the metrics that carry the most signal for that type of agent. Select the cluster that matches your deployment, then tune weights using your organisation’s risk tolerance and regulatory posture.

Weighted Scorecard: Enterprise AI Agent Release Template

The table below shows how to structure a weighted scorecard across core evaluation dimensions. Adjust weights to match your domain cluster above and your organisation’s risk posture.
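As a rough illustration of the arithmetic behind such a scorecard, the sketch below computes a weighted average over per-dimension scores in the range 0 to 1. The dimension names are taken from the rubric step earlier in the article, but the weights and scores are placeholder assumptions, not recommended values, and `weighted_score` is a hypothetical helper.

```python
from typing import Dict


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-dimension scores, each expected in [0, 1]."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    if any(not 0.0 <= value <= 1.0 for value in scores.values()):
        raise ValueError("each score must be between 0 and 1")
    if any(weight < 0 for weight in weights.values()):
        raise ValueError("weights must not be negative")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("at least one weight must be positive")
    return sum(scores[dim] * weights[dim] for dim in weights) / total_weight


# Placeholder weights: higher numbers mark dimensions where a failure costs more.
weights = {
    "business_accuracy": 3,
    "tool_correctness": 3,
    "compliance": 2,
    "hallucination_control": 2,
    "clarity": 1,
}
# Placeholder scores from one hypothetical evaluation run.
scores = {
    "business_accuracy": 0.9,
    "tool_correctness": 1.0,
    "compliance": 0.8,
    "hallucination_control": 0.7,
    "clarity": 0.5,
}
print(f"overall: {weighted_score(scores, weights):.3f}")
```

Dividing by the total weight means the weights do not need to sum to 1, so a team can add or re-weight a dimension without rebalancing the rest.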

Business-Context Metric Matrix

The following matrix maps enterprise agent types to their primary KPI, the hardest-to-catch failure mode, and the metric that most reliably surfaces it.

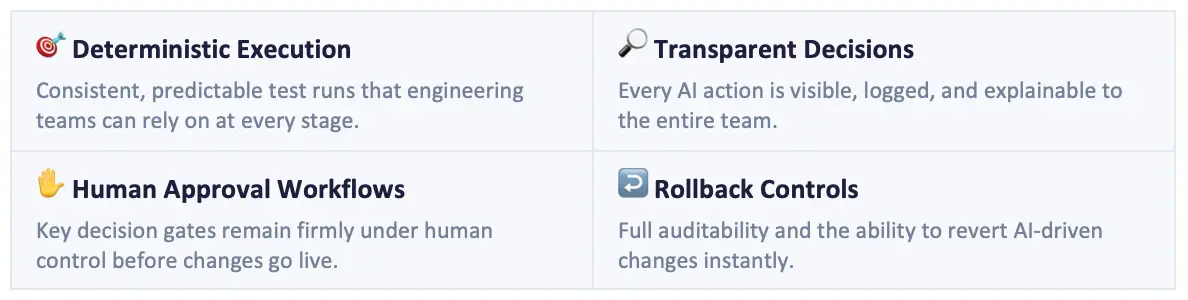

Release Gates — Translating Scores into Decisions

Every weighted scorecard must terminate in an explicit business decision. The four-gate model below maps score ranges to actions and assigns responsibility for each outcome.
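A gate function can be sketched in a few lines. The four gate names come from the article; the numeric thresholds and the rule that any critical failure blocks release regardless of score are illustrative assumptions that each organisation would replace with its own risk thresholds. `Gate` and `release_gate` are hypothetical names.

```python
from enum import Enum
from typing import Sequence


class Gate(Enum):
    PASS = "Pass"
    CONDITIONAL_PASS = "Conditional Pass"
    HUMAN_REVIEW = "Human Review Required"
    BLOCK = "Block"


def release_gate(score: float,
                 critical_failures: Sequence[str] = (),
                 pass_at: float = 0.90,
                 conditional_at: float = 0.80,
                 review_at: float = 0.65) -> Gate:
    """Map an overall score in [0, 1] to a gate; thresholds here are assumed defaults."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if not pass_at >= conditional_at >= review_at:
        raise ValueError("thresholds must be ordered from highest to lowest")
    # Assumption: a critical failure (e.g. a wrong tool call) overrides a high score.
    if critical_failures:
        return Gate.BLOCK
    if score >= pass_at:
        return Gate.PASS
    if score >= conditional_at:
        return Gate.CONDITIONAL_PASS
    if score >= review_at:
        return Gate.HUMAN_REVIEW
    return Gate.BLOCK


print(release_gate(0.93).value)
print(release_gate(0.93, critical_failures=["wrong tool call"]).value)
```

Checking critical failures before the score keeps a strong average from masking a single release-blocking defect.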

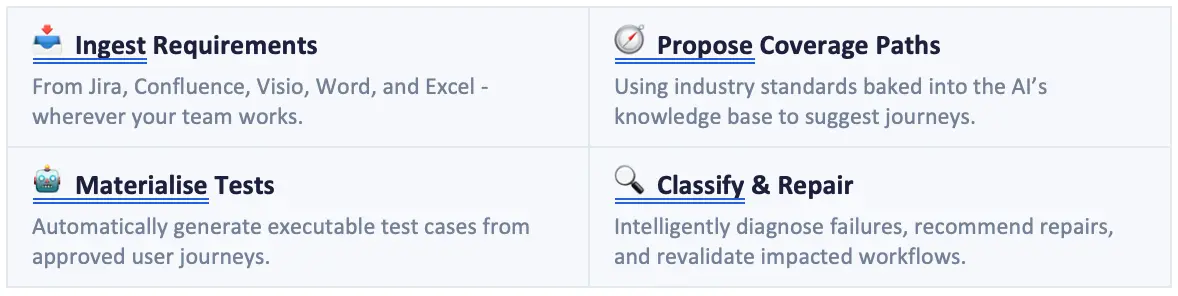

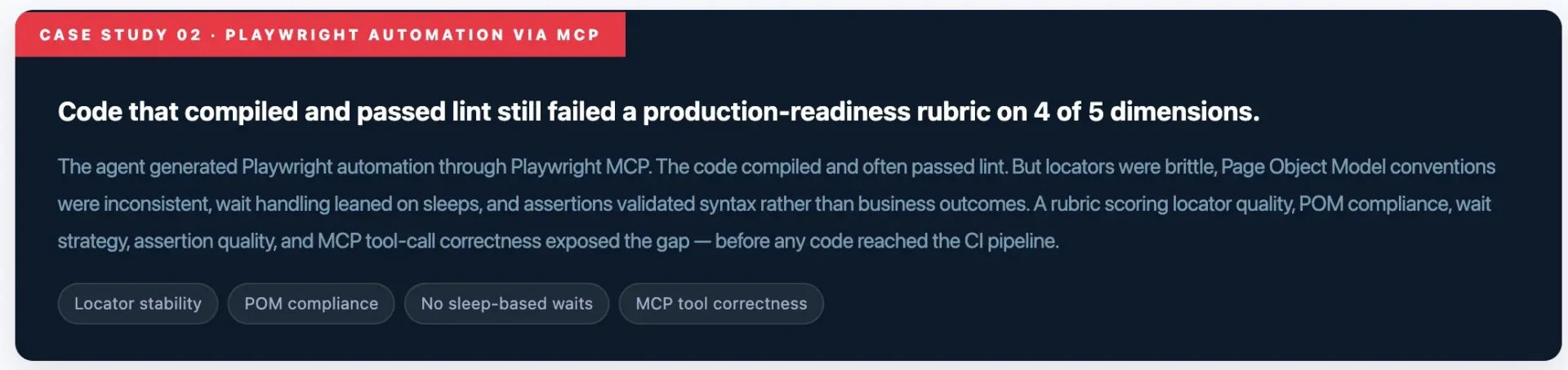

Lessons from testron.ai Implementations

What This Means for QA Teams

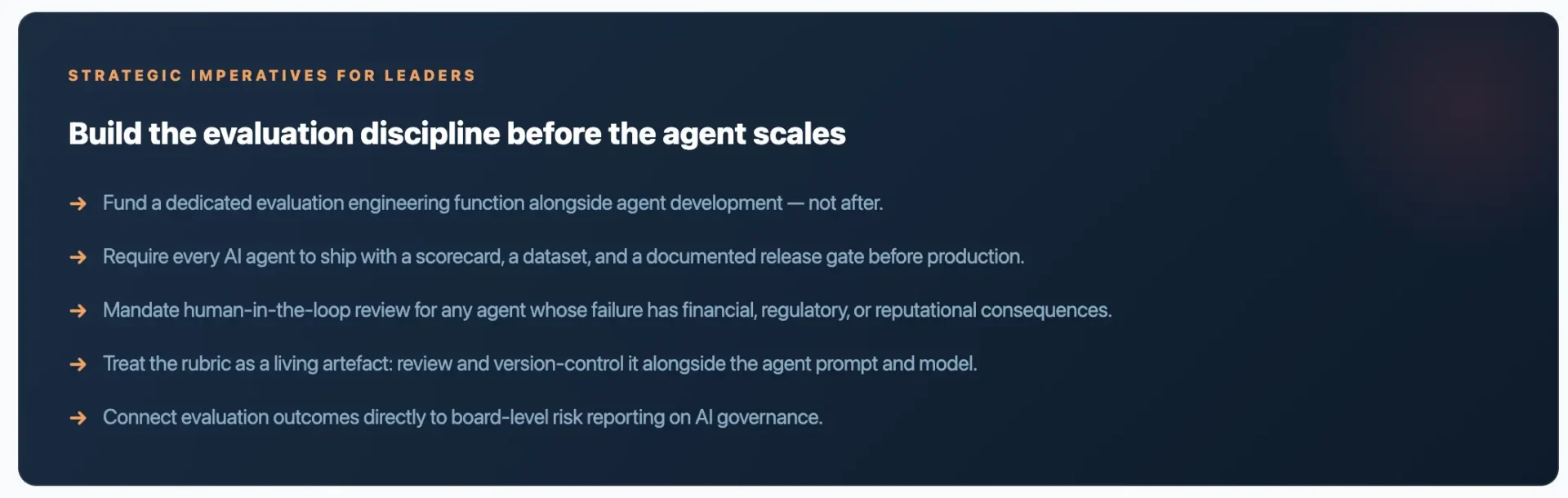

Agentic AI is reshaping the QA mandate. Test execution is no longer the centre of gravity; evaluation design is. The QA function becomes the quality gatekeeper for enterprise AI agents — owning rubrics, scorecards, regression datasets, and human-in-the-loop calibration.

The skills that compound from here are domain-aware evaluation design, structured human review, and translating business risk into release gates that engineering and the business both trust.

Closing

The future of testing is not just more automation. It is trusted AI-agent evaluation: a clear mission, a custom rubric tuned to business context, a weighted scorecard, calibrated human review, and a release gate that reflects business risk.

Teams that build this discipline now will be the ones who put agents into production with confidence — and who earn the trust of the board, the regulator, and the customer.

References:

Gartner: Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027 — gartner.com

DeepEval — deepeval.com

Author

Babu Manickam, CTO, Indsafri

Babu has over 27 years of experience in software testing, test automation, performance engineering, DevOps, and AI-led quality engineering. He has trained more than 50,000 QA professionals and works with enterprises to implement modern testing practices across automation, Generative AI, and agentic quality engineering. He is an active speaker and community contributor in the software testing ecosystem, with a strong focus on helping QA professionals transition into AI-augmented engineering roles.

Indsafri is exhibiting at EuroSTAR 2026. Join us at the EuroSTAR Conference in Oslo, 15-18 June 2026.