How does the automatic execution of manual tests speed up test cycles?

On the surface, “automatic execution of manual tests” sounds like an oxymoron. The industry has always operated on a binary: manual means a human tester; automatic means a script, a framework, and an automation engineer. For decades, “manual” has been synonymous with human intervention.

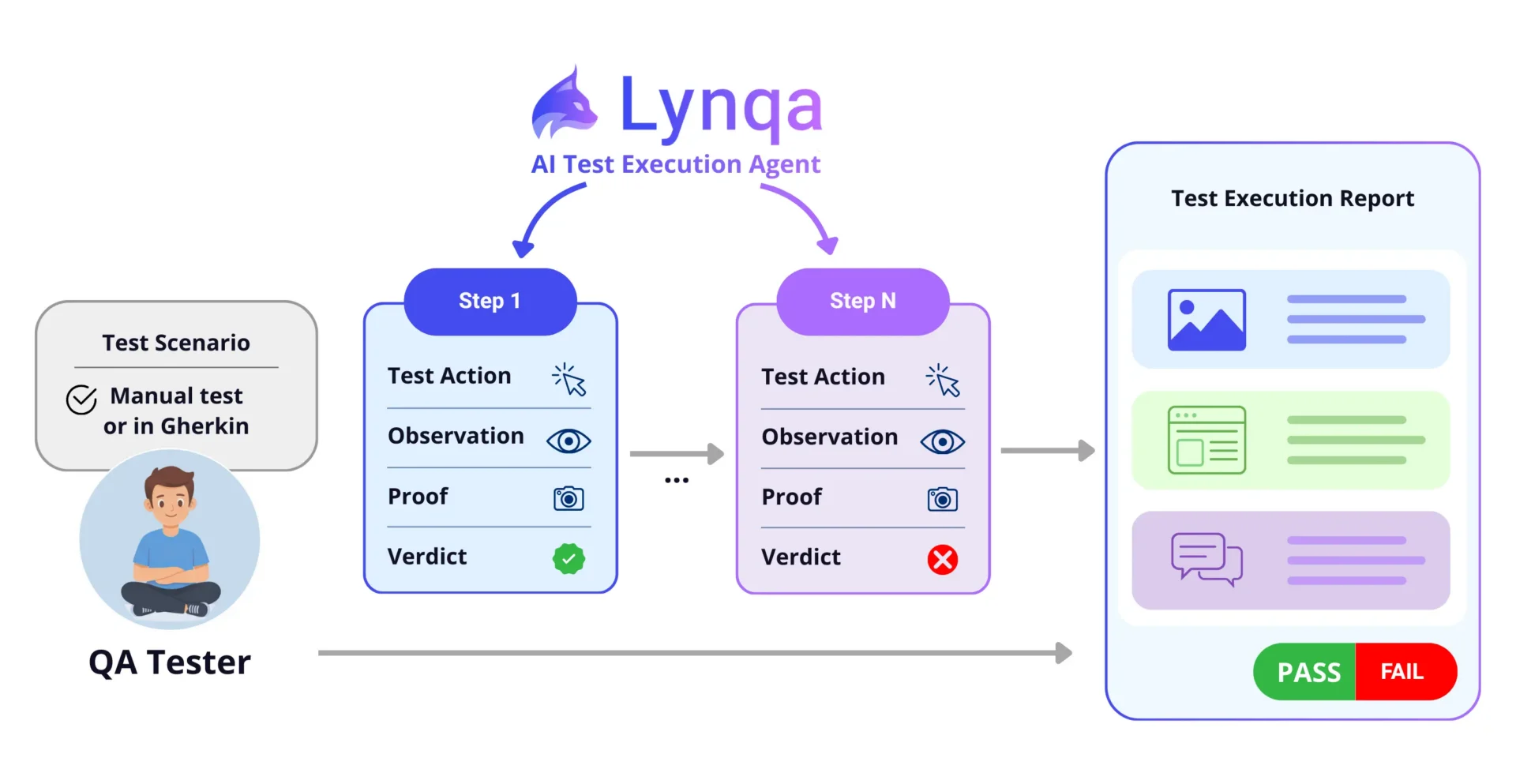

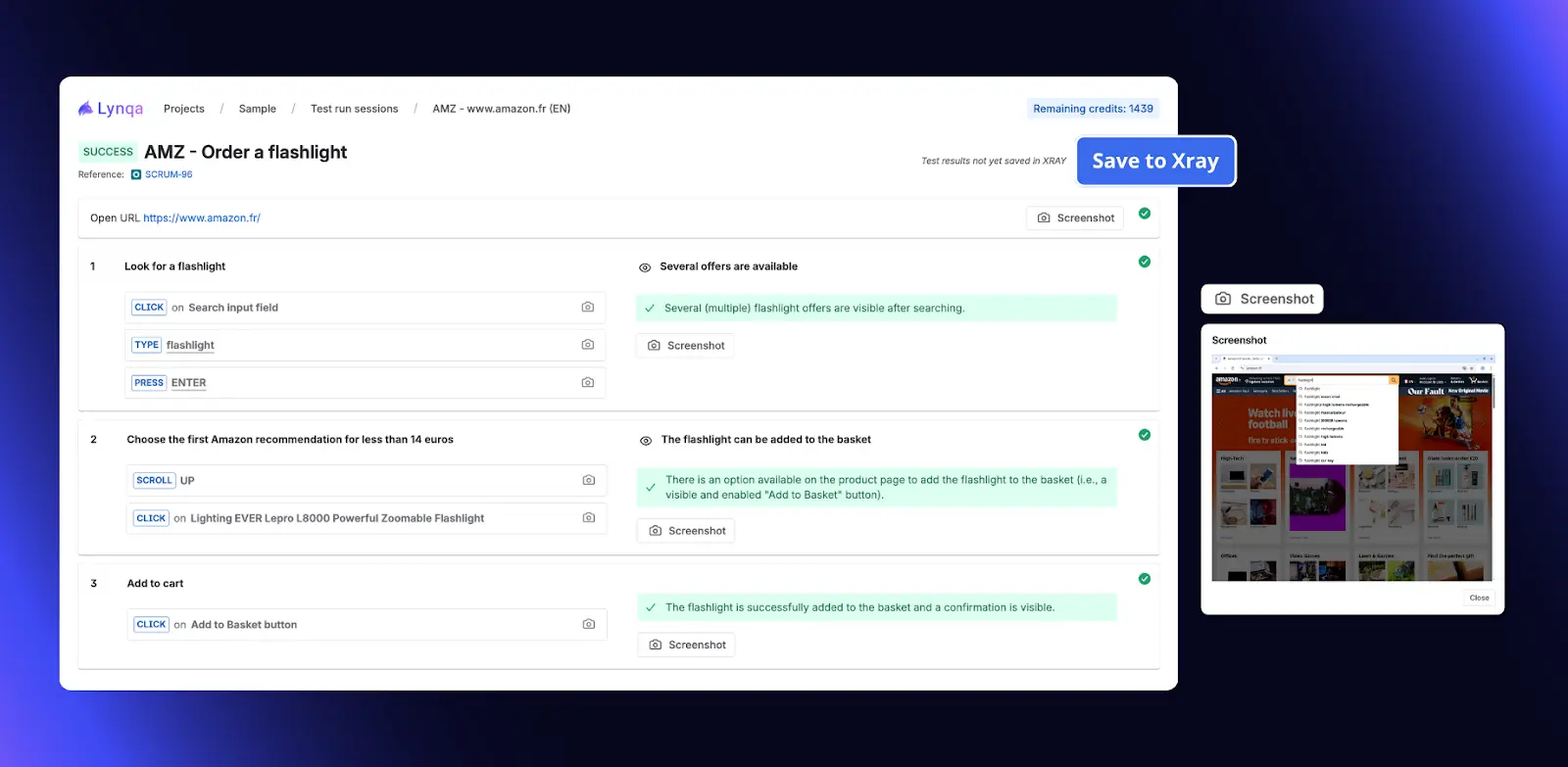

But agentic AI is turning this contradiction into a practical capability. At the heart of this shift is Lynqa, an AI agent designed to run manual tests directly on the GUI. It understands test procedures, navigates the application interface, verifies results, and delivers a detailed execution report without a single line of automation code.

The Sprint Bottleneck: Why “Manual” Needs a Boost

Every QA team knows the pattern: as the sprint nears completion, the volume of manual checks piles up, testers are stretched thin, and feedback to developers slows to a crawl. This is the “Manual Testing Debt.”

The usual responses, hiring more testers or rushing to automate new features, rarely work within a single sprint. Traditional test automation simply takes too long to develop alongside the feature it’s meant to validate.

This is where GUI AI agents change the game.

What Are GUI AI Agents?

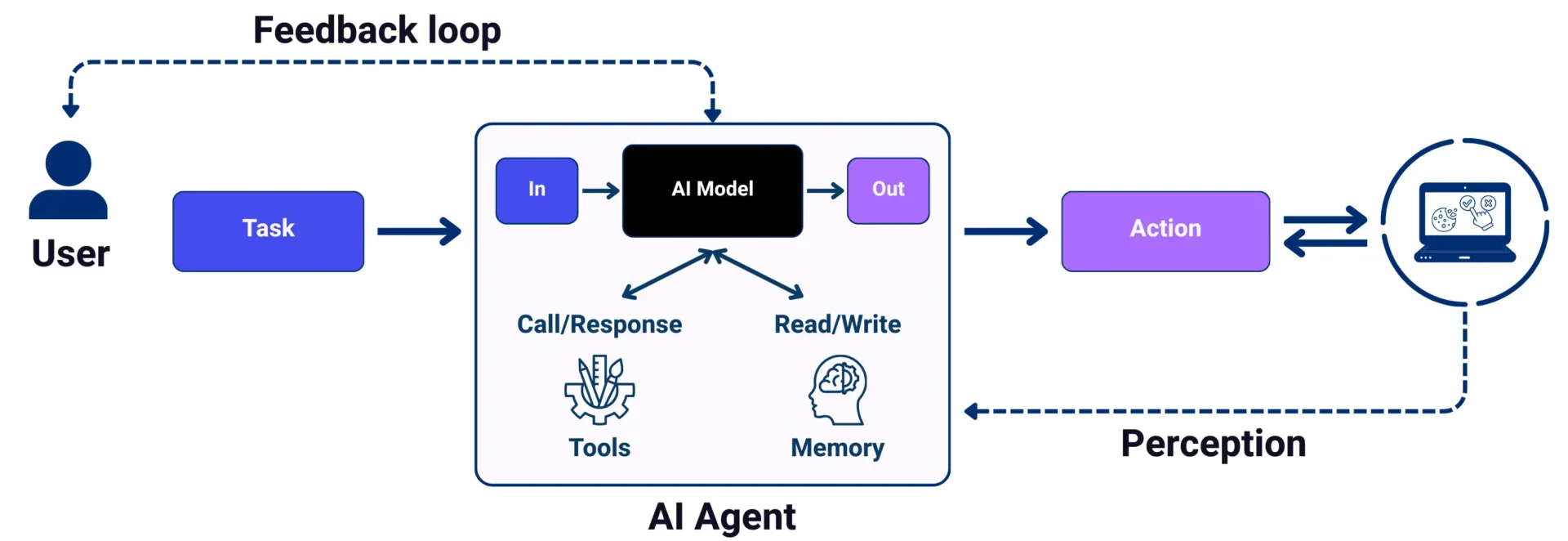

GUI AI agents interact with software by visually interpreting the screen and operating a virtual mouse and keyboard. Instead of relying on backend APIs, an agent executes complex digital workflows through the interface, the way a human user would:

- GUI-Based Actions: It opens browsers, scrolls through menus, fills in fields, and navigates complex clients such as ERP systems.

- Visual Perception: It monitors progress by analyzing the screen at each step, identifying the areas affected by an action or verification.

The Feedback Loop: Thought and Action

The agent operates in a continuous feedback loop that combines reasoning with action:

- Interpretation: It reads the test steps and builds a logical action plan.

- Perception: It analyzes the current screen to decide which interaction (click, type, drag) to perform.

- Monitoring: After every action, it checks the UI state to verify the expected result.

- Communication: If the agent encounters an ambiguity or a high-risk step, it pauses to ask the user for clarification before proceeding.

This means the agent doesn’t blindly follow a script; it reacts to the application’s actual behavior.
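The loop described above can be sketched in a few lines of Python. This is a toy illustration only, not Lynqa's implementation: `Step`, `FakeScreen`, `run_test`, and `ask_user` are hypothetical names invented for this example, the "screen" is an in-memory stand-in rather than a real GUI, and the interpretation phase is skipped by assuming the test steps are already structured.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    """One manual test step (hypothetical structure for this sketch)."""
    action: str             # "type" or "click"
    target: str             # visible label of the UI element
    value: str = ""         # text to enter, for "type" actions
    expected: str = ""      # text expected on screen after the action
    high_risk: bool = False


@dataclass
class FakeScreen:
    """Toy stand-in for a real GUI: form fields plus the visible text."""
    fields: Dict[str, str] = field(default_factory=dict)
    text: str = "Login page"

    def apply(self, step: Step) -> None:
        if step.action == "type":
            self.fields[step.target] = step.value
        elif step.action == "click" and step.target == "Sign in":
            ok = self.fields.get("Username") == "alice"
            self.text = "Welcome, alice" if ok else "Invalid credentials"


def run_test(steps: List[Step], screen: FakeScreen,
             ask_user: Callable[[Step], bool]) -> List[dict]:
    """Act, then verify after every action; pause on high-risk steps."""
    report = []
    for number, step in enumerate(steps, start=1):
        # Communication: ask before performing a high-risk step.
        if step.high_risk and not ask_user(step):
            report.append({"step": number, "status": "skipped"})
            continue
        # Action: perform the interaction on the (fake) screen.
        screen.apply(step)
        # Monitoring: compare the observed UI state with the expected result.
        passed = (not step.expected) or (step.expected in screen.text)
        report.append({
            "step": number,
            "status": "passed" if passed else "failed",
            "observed": screen.text,
        })
        if not passed:
            break  # stop at the first failed verification
    return report


steps = [
    Step("type", "Username", "alice"),
    Step("type", "Password", "s3cret"),
    Step("click", "Sign in", expected="Welcome, alice"),
]
report = run_test(steps, FakeScreen(), ask_user=lambda step: True)
```

The point of the sketch is the shape of the loop: each step is followed by a check of the observed state, so the run reports what the application actually showed instead of assuming that every action succeeded.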

Lynqa: Agentic AI Inside Your Test Management Tool

While general-purpose AI agents are appearing in consumer chatbots, Lynqa for Jira is purpose-built for the testing ecosystem. By integrating directly into Jira/Xray, it transforms how teams handle manual workloads within a sprint.

How it speeds up the cycle:

- Zero Scripting Overhead: If you have a written test case or a clearly defined User Story, the agent can execute it immediately, without waiting for an automation engineer. This enables “Day 1” testing of new features.

- Adaptive Execution: Traditional automation breaks when a button moves, a CSS class is renamed, or a color shifts. The AI agent recognizes the “Submit” button visually, regardless of underlying code changes, which removes the maintenance tax that halts progress.

- Analysis-Ready Reporting: The agent doesn’t just return “Pass” or “Fail.” It provides step-by-step screenshots and a detailed failure analysis, giving testers actionable information immediately.
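The Adaptive Execution point above can be made concrete with a small, simplified comparison. This sketch assumes a page is just a list of elements with a CSS class and a visible label; `Element`, `find_by_selector`, and `find_by_label` are hypothetical helpers, and matching on the visible label is only a rough stand-in for the visual perception a real agent performs on screenshots.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Element:
    css_class: str   # implementation detail that may change between releases
    label: str       # what the user actually sees on the button


def find_by_selector(page: List[Element], css_class: str) -> Optional[Element]:
    """Script-style lookup: depends on an internal CSS class."""
    return next((e for e in page if e.css_class == css_class), None)


def find_by_label(page: List[Element], label: str) -> Optional[Element]:
    """User-style lookup: depends only on the visible label."""
    wanted = label.strip().lower()
    return next((e for e in page if e.label.strip().lower() == wanted), None)


# The same page before and after a front-end refactoring renamed the class.
page_v1 = [Element("btn-primary", "Submit"), Element("btn-link", "Cancel")]
page_v2 = [Element("button--cta", "Submit"), Element("button--ghost", "Cancel")]

selector_hit_v1 = find_by_selector(page_v1, "btn-primary")  # found
selector_hit_v2 = find_by_selector(page_v2, "btn-primary")  # None: selector is stale
label_hit_v2 = find_by_label(page_v2, "Submit")             # still found
```

After the rename, the selector-based lookup returns nothing while the label-based lookup still finds the button, which is the maintenance difference the bullet describes.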

Start your experience with Lynqa today! We offer 10 free credits upon installation, or you can book a demo with our team. Find more information here.

A Collaborative Future: The QA “Tribe”

At EuroSTAR, we talk about “finding your tribe” and sharing the passion for quality. AI agents aren’t here to replace human testers; they’re here to empower the tribe.

The most effective teams use agents like Lynqa as extra hands. While the agent handles repetitive GUI-level checks and initial failure analysis, human testers reclaim time for the work that matters most: exploratory testing, risk analysis, and strategic quality coaching for the development team.

The tester’s role evolves from executor of steps to orchestrator of agents. As we look toward the 2026 EuroSTAR Conference, the question is no longer whether manual tests can be automated; it’s how quickly your team can leverage agentic AI to keep pace with modern delivery.

Author

Bruno Legeard, Smartesting

Bruno Legeard heads up the AI Lab at Smartesting (publisher of Lynqa). An expert in AI for software testing, he is one of the authors of the ISTQB CT-GenAI certification “Testing with Generative AI.”

Meet the Lynqa team at the Smartesting booth during the EuroSTAR 2026 Conference to see agentic testing in action!

Smartesting is an exhibitor at EuroSTAR 2026. Join us at the EuroSTAR Conference in Oslo, 15-18 June 2026.