AI QA is reshaping software testing by bringing intelligence into every stage of the development lifecycle. By combining AI and machine learning, QA teams are moving from brittle automation to adaptive, predictive strategies that catch bugs earlier, reduce test maintenance, and speed up releases.

This post breaks down how artificial intelligence (AI) in quality assurance (QA) is transforming software testing, from smarter test case generation and faster defect prediction to continuous optimization. You’ll also see how teams can start applying AI in practical ways.

What is artificial intelligence in quality assurance?

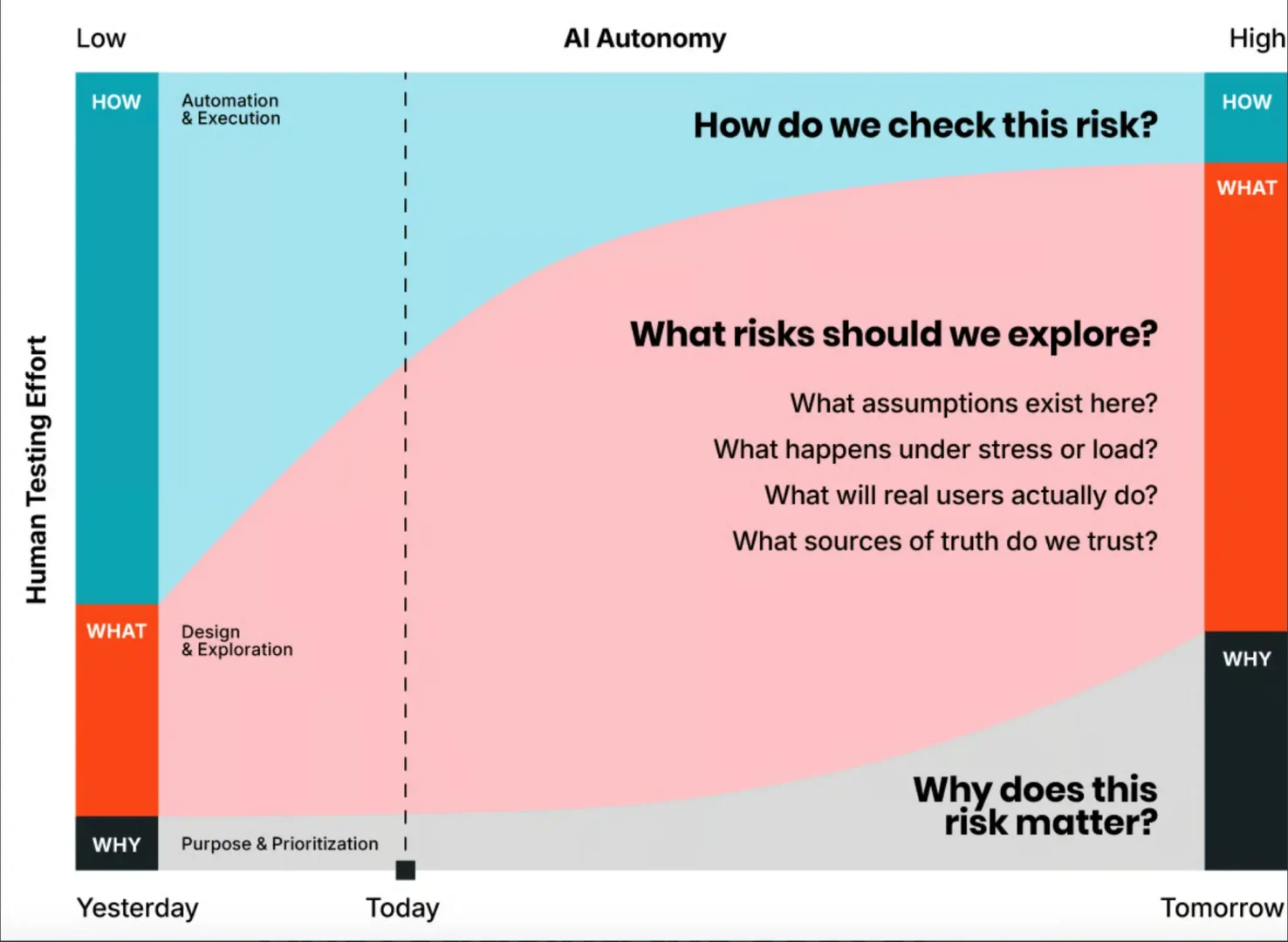

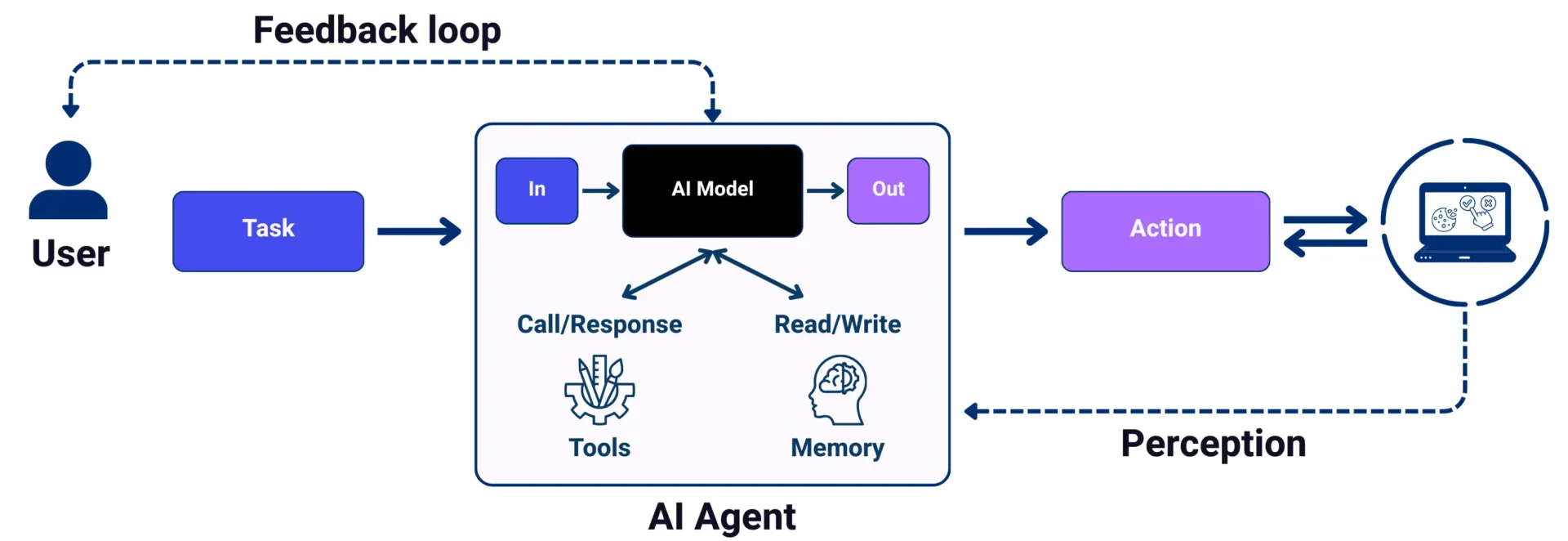

AI QA refers to the integration of AI and machine learning (ML) into quality assurance workflows. Practically speaking, it’s about using AI to take repetitive tasks off the QA team’s plate, giving them more time to focus on activities that require human insight, like exploratory testing or evaluating edge-case behavior.

A few of the jobs AI QA can perform include:

- Generating test cases based on user behavior, system logs, requirements, or recent code changes.

- Predicting failure points by analyzing historical defect data, commit patterns, and code complexity.

- Triaging bugs automatically using NLP (Natural Language Processing) to group related issues, flag duplicates, and suggest likely root causes.

- Prioritizing test execution based on risk scores, code velocity, and business-critical areas to reduce unnecessary test cycles.

- Maintaining and evolving test suites by identifying outdated tests and generating new ones in response to product changes.
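
To make the bug triage item more concrete, here is a minimal sketch of duplicate flagging. It assumes bug reports are available as plain-text summaries and uses simple word overlap (Jaccard similarity) as a stand-in for the NLP models a real tool would use. The bug IDs, the summaries, and the 0.5 threshold are all hypothetical.

```python
import re
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of words the two texts have in common (0.0 to 1.0)."""
    words_a, words_b = tokens(a), tokens(b)
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def likely_duplicates(reports: dict[str, str], threshold: float = 0.5):
    """Return pairs of bug IDs whose summaries overlap at or above the threshold."""
    pairs = []
    for (id_a, text_a), (id_b, text_b) in combinations(reports.items(), 2):
        if jaccard(text_a, text_b) >= threshold:
            pairs.append((id_a, id_b))
    return pairs


# Hypothetical bug reports, for illustration only.
reports = {
    "BUG-101": "Checkout page crashes when cart is empty",
    "BUG-102": "Crash on checkout page when the cart is empty",
    "BUG-103": "Profile photo upload fails on Safari",
}

print(likely_duplicates(reports))  # [('BUG-101', 'BUG-102')]
```

Word overlap misses paraphrases that share no vocabulary, which is where embedding-based similarity would typically take over. A human should still confirm a flagged pair before closing either report.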

Why do QA teams need AI now?

Modern QA teams aren’t lacking tools. They’re lacking time, visibility, and actionable insights. Release cycles are getting shorter, systems are more complex, and user expectations continue to rise.

Here’s how that pressure shows up in practice:

- Tests are running, but the value is unclear.

- Automation is fragile, and fixing brittle test scripts often takes time away from coverage.

- Bugs still make it to production when testing isn’t aligned with real-world risk.

- QA becomes a bottleneck when teams are expected to sign off without enough time, data, or confidence.

- Leadership can’t measure impact without clear metrics.

AI QA helps solve these problems by enabling teams to work smarter, not harder. Instead of adding more scripts or expanding headcount, AI reduces waste and helps teams zero in on what really matters. The result is faster feedback and higher-quality releases.

How AI is helping teams improve efficiency

Here are five ways AI QA is helping teams improve efficiency and focus on what matters most:

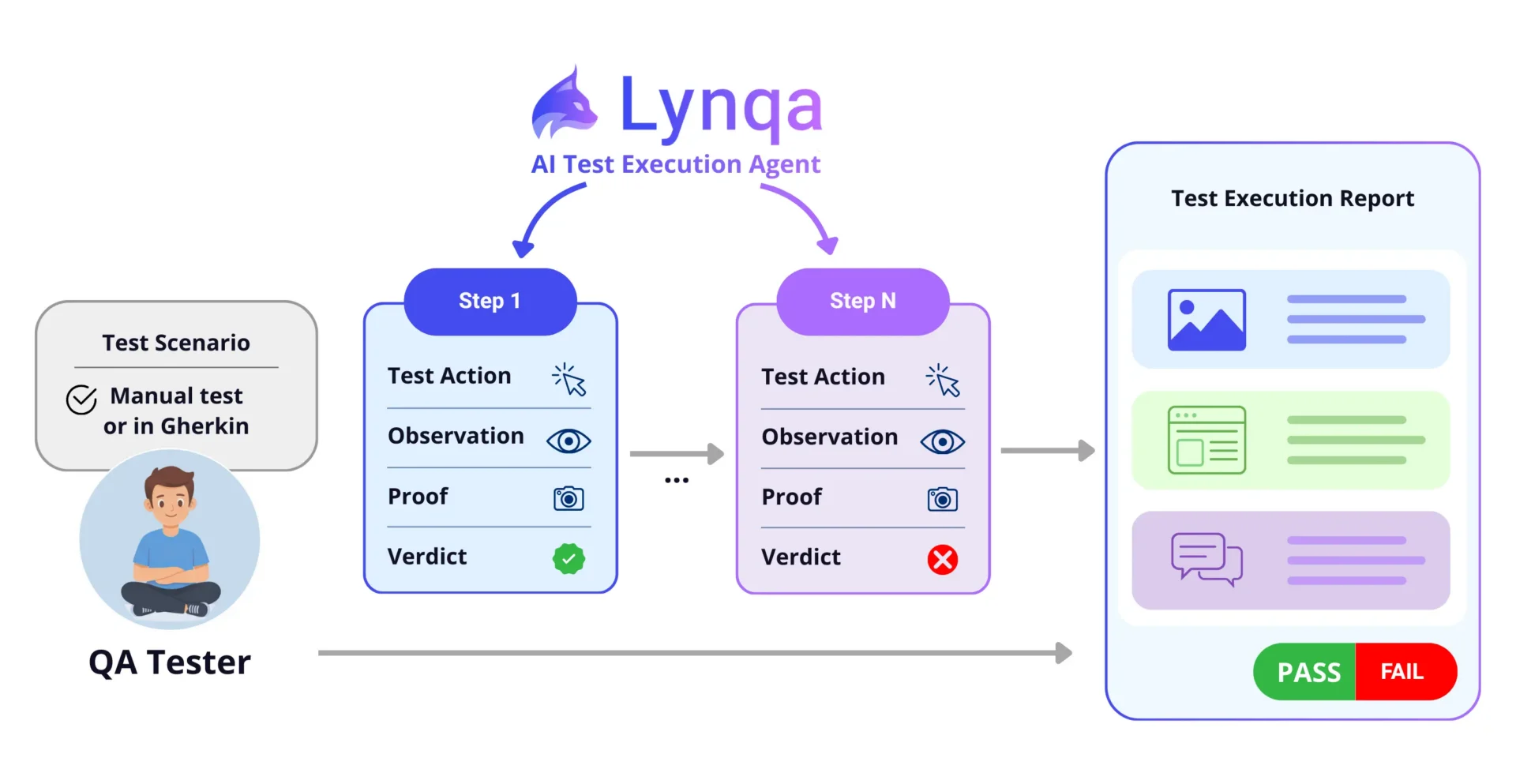

- Smarter test generation: AI tools analyze usage patterns, code changes, and defect logs to automatically generate test cases, saving time and improving test coverage.

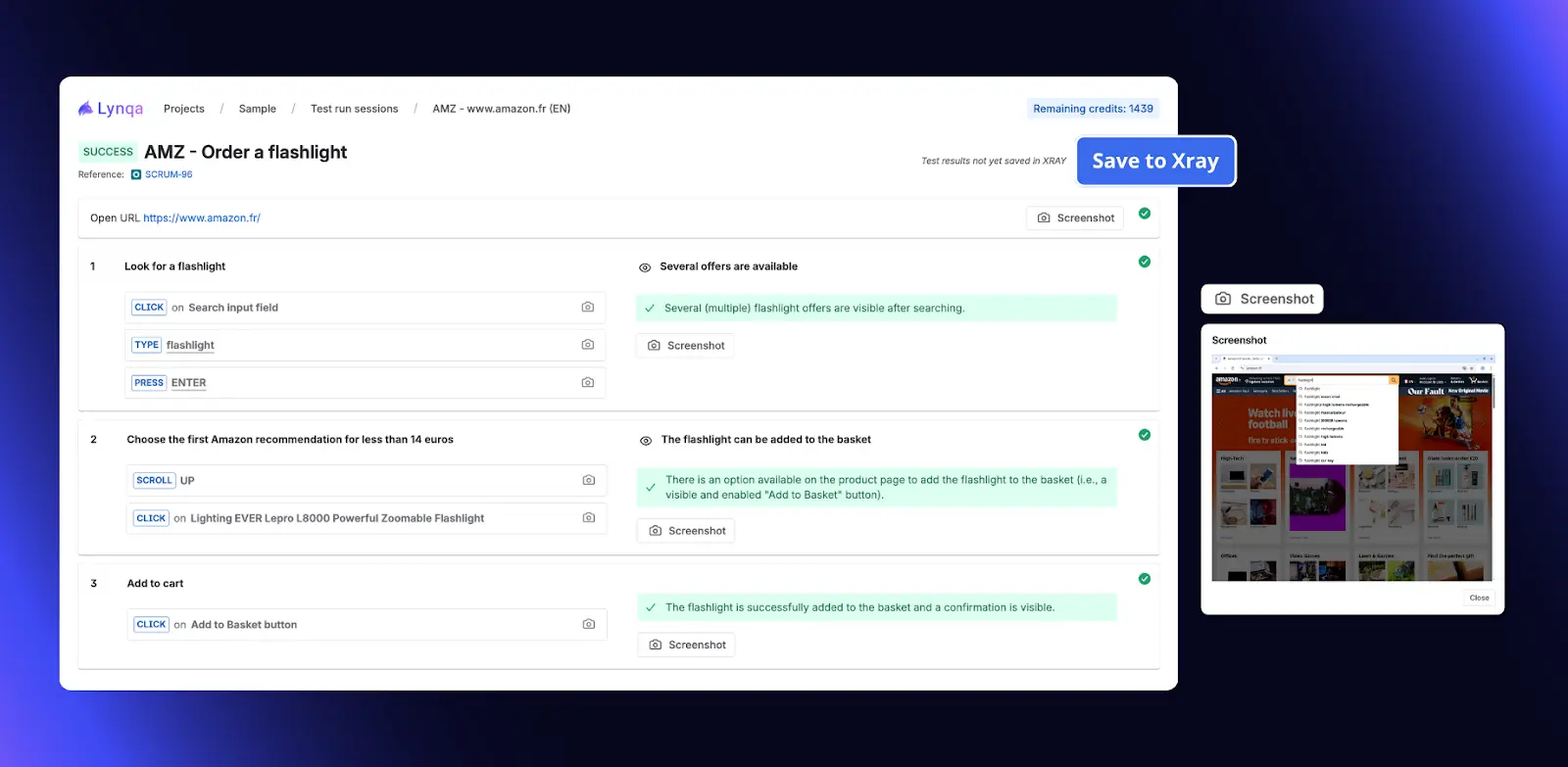

With TestRail AI, you can generate first-draft test cases from your requirements text, then review and refine them before adding them to your suite.

- Faster defect prediction: By modeling factors like code churn, commit frequency, and historical defect density, AI highlights high-risk areas before issues reach staging or production.

- Intelligent bug triage: Using NLP, AI groups related bugs, flags duplicates, and suggests likely owners, helping teams resolve issues faster and reduce backlog noise.

- Risk-based test prioritization: Rather than running every test on every build, AI assigns risk scores and ranks test cases based on business impact, recent changes, and failure likelihood.

- Continuous test suite maintenance: AI flags outdated or redundant tests to reduce false positives and maintenance overhead.
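
As a rough illustration of risk-based test prioritization, the sketch below ranks tests by a weighted score built from three signals named in the list: failure likelihood, recent changes, and business impact. The weights, field names, and sample tests are assumptions made for this example. They do not describe how TestRail or any specific tool calculates risk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteEntry:
    name: str
    failure_rate: float          # share of recent runs that failed, 0.0 to 1.0
    touches_changed_code: bool   # does the test cover code changed in this build?
    business_impact: float       # 0.0 (cosmetic) to 1.0 (business-critical)


# Hypothetical weights; a real tool would tune or learn these from history.
WEIGHTS = {"failure": 0.4, "change": 0.35, "impact": 0.25}


def risk_score(entry: SuiteEntry) -> float:
    """Combine the three signals into one score between 0 and 1."""
    change_signal = 1.0 if entry.touches_changed_code else 0.0
    return (
        WEIGHTS["failure"] * entry.failure_rate
        + WEIGHTS["change"] * change_signal
        + WEIGHTS["impact"] * entry.business_impact
    )


def prioritize(entries: list[SuiteEntry], budget: int) -> list[SuiteEntry]:
    """Return the `budget` highest-risk tests, highest risk first."""
    ranked = sorted(entries, key=risk_score, reverse=True)
    return ranked[:budget]


# Hypothetical suite, for illustration only.
suite = [
    SuiteEntry("checkout_payment", 0.20, True, 1.0),
    SuiteEntry("profile_avatar_upload", 0.05, False, 0.2),
    SuiteEntry("login_sso", 0.10, True, 0.9),
    SuiteEntry("footer_links", 0.01, False, 0.1),
]

for entry in prioritize(suite, budget=2):
    print(f"{entry.name}: {risk_score(entry):.2f}")
```

With a budget of two, only the checkout and login tests run on this build. The lower-risk tests can move to a nightly or pre-release run instead of being dropped.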

The strategic edge: QA leaders are tracking and testing

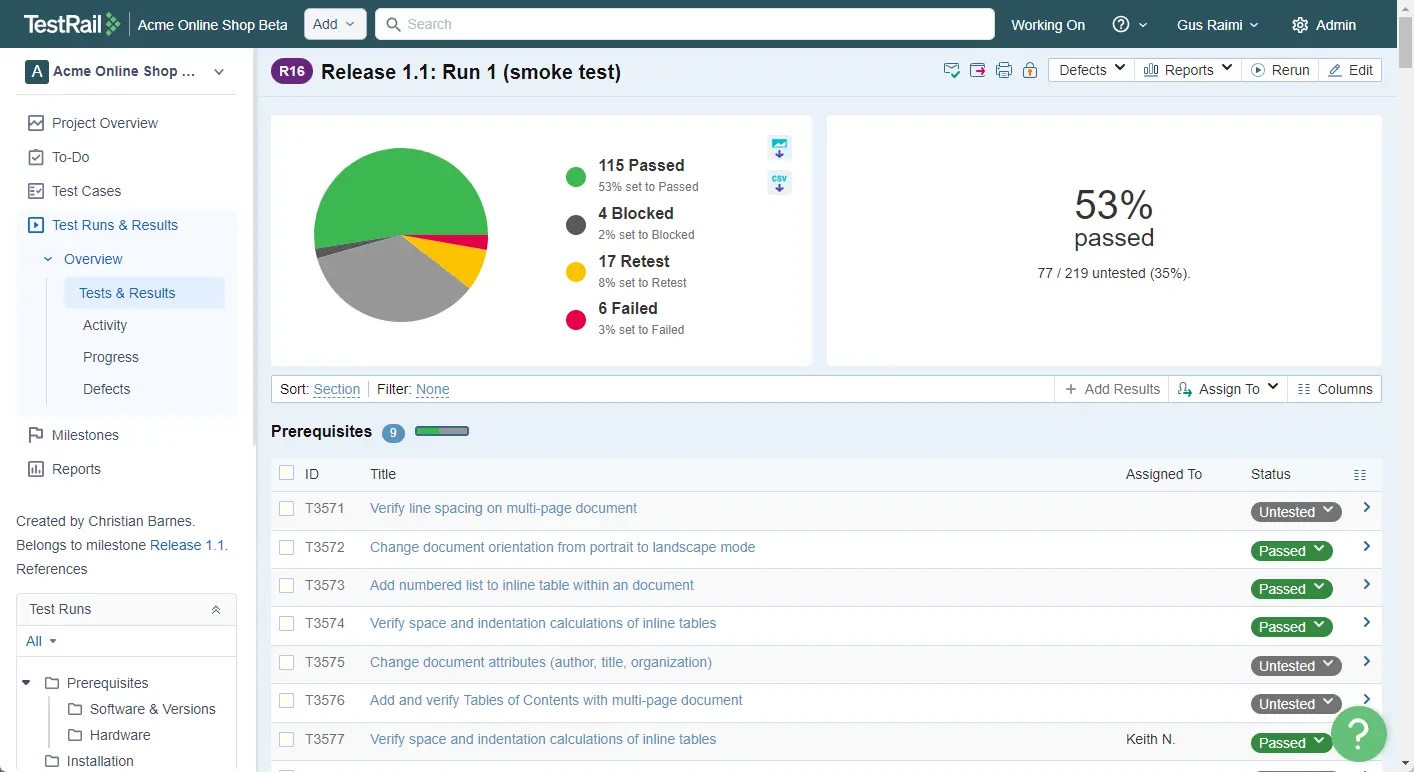

QA teams are taking a more strategic approach. They’re seeking better visibility into what’s working, what’s wasting time, and where risk is hiding. AI is helping leaders track metrics like:

- Test debt velocity: How quickly are tests becoming outdated, and how does that affect confidence in test results?

- Risk-based test ROI: Which tests are consistently catching critical bugs, and which ones are just noise?

- AI vs. manual performance: How do AI-generated tests compare to manual ones in terms of defect yield and maintenance cost?

- Suite stability trends: Where is test flakiness increasing, and what are the patterns behind it?
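
Suite stability is one of the easier metrics to start measuring. The sketch below uses a common working definition of flakiness: a test is flaky if it both passes and fails on the same commit. The run history format and the test names are hypothetical, and in practice the data would come from your CI system or test management tool.

```python
from collections import defaultdict

# Hypothetical run history: (test name, commit, passed?)
runs = [
    ("test_login", "a1", True),
    ("test_login", "a1", False),
    ("test_login", "a1", True),
    ("test_search", "a1", True),
    ("test_search", "b2", False),
    ("test_search", "b2", False),
    ("test_export", "a1", True),
    ("test_export", "b2", True),
]


def flaky_tests(history):
    """Return tests that produced both a pass and a fail on a single commit."""
    outcomes = defaultdict(set)
    for name, commit, passed in history:
        outcomes[(name, commit)].add(passed)
    # Two distinct outcomes on one commit means the code did not change,
    # so the differing result points at the test or its environment.
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})


print(flaky_tests(runs))  # ['test_login']
```

Note that `test_search` is not reported. It fails consistently on commit `b2`, which looks like a real regression and not flakiness. Tracking the size of this list per week gives a simple stability trend.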

How can my team start using AI in QA?

Start small and focus on efficiency. The most effective teams begin by identifying where AI can have the greatest immediate impact and then build from there.

- Map your friction. Where are you losing speed or confidence today?

- Pick one high-leverage use case. Flakiness detection and test generation are great entry points. One simple starting point is using TestRail AI to draft test cases from requirements, then standardizing them with a human review step.

- Choose transparent tools. Make sure your AI doesn’t introduce black-box risk.

- Connect everything to TestRail. Use it as your system of record to track, trace, and manage your evolving strategy.

How TestRail helps teams create an AI QA strategy

AI QA tools can generate tests, flag risks, and optimize execution, but they work best within a structured system. TestRail brings those insights together, helping teams turn AI QA into a repeatable strategy that scales across teams and release cycles.

Here’s how TestRail works for AI QA:

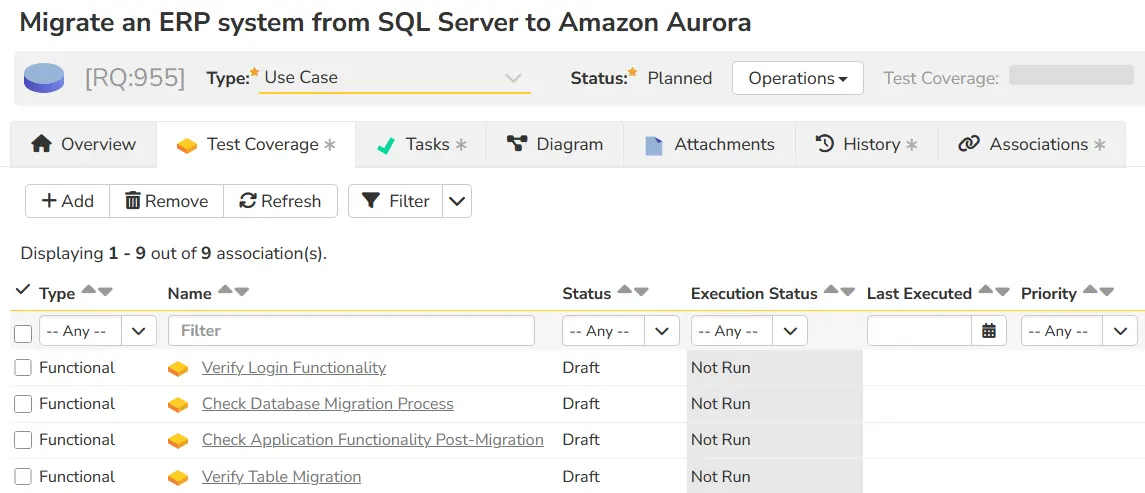

- Track and generate tests in context: Use TestRail AI to draft test cases from requirements text, then manage AI-assisted and manual tests together with full visibility into history, execution, and ownership.

- Visualize test coverage by risk: Filter by release, component, or risk category to see gaps and trends.

- Centralize automated results: Connect TestRail to your automation and CI/CD pipeline to centralize reporting across automated test runs.

- Maintain end-to-end traceability: Link test execution to requirements, defects, and user stories for complete accountability.

- Report with clarity: Use dashboards and custom reports to surface performance trends, identify bottlenecks, and share QA impact across teams.

TestRail is built so that speed is measurable. Even as complexity grows, your team stays in control.

Integrate a streamlined workflow with TestRail

Quality assurance has to account for real-world risk and complexity. Platforms like TestRail let you leverage AI QA without losing visibility, giving you tighter feedback loops and more confident releases. See it firsthand: start your free 30-day trial today.

Author

Patrícia Duarte Mateus

With more than a decade of experience in Software QA and expertise in several business areas, Patrícia Duarte Mateus has a QA mindset built by the different roles she has played, including tester, test manager, test analyst, and QA engineer. She’s Portuguese, living in Portugal, and is currently a Solution Architect and QA Advocate for TestRail. Patrícia is also a speaker, mentor, and founder of a project whose objective is to demystify and educate on Software QA with a focus on Portuguese-speaking people, called “A QA Portuguesa”. Her areas of interest beyond QA include deepening her knowledge of psychology, tech, management, teaching/mentoring, health, and entrepreneurship. Books, podcasts, TED Talks, and YouTube are always on Patrícia’s to-do list to ensure a good day!

TestRail is participating in EuroSTAR Conference 2026 as a Gold Sponsor. Join us at the EuroSTAR Conference EXPO in Oslo, 15-18 June 2026.